How Edge AI Systems Are Typically Deployed On-Site

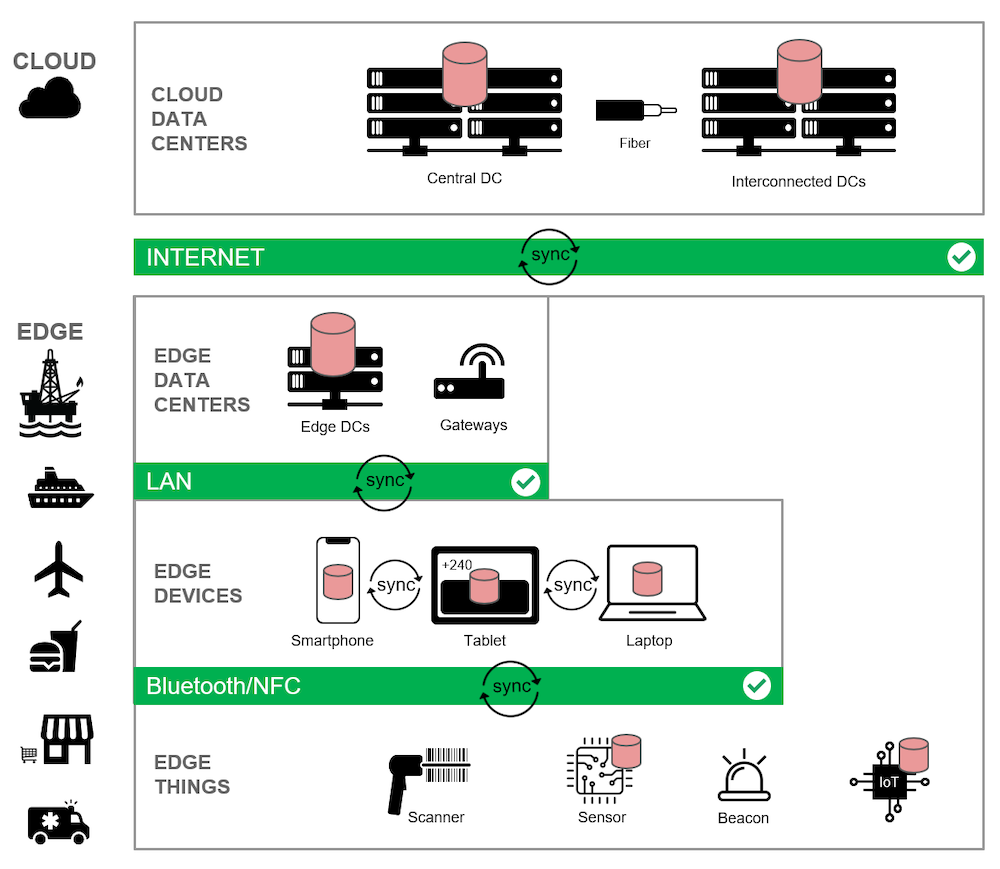

In real-world environments, Edge AI systems are designed to operate close to data sources such as cameras and sensors.

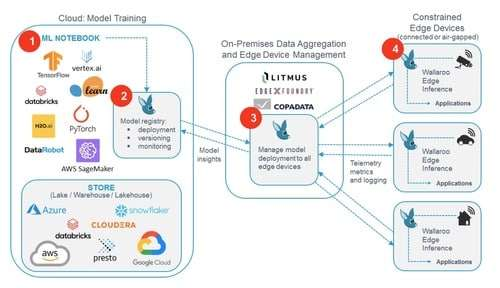

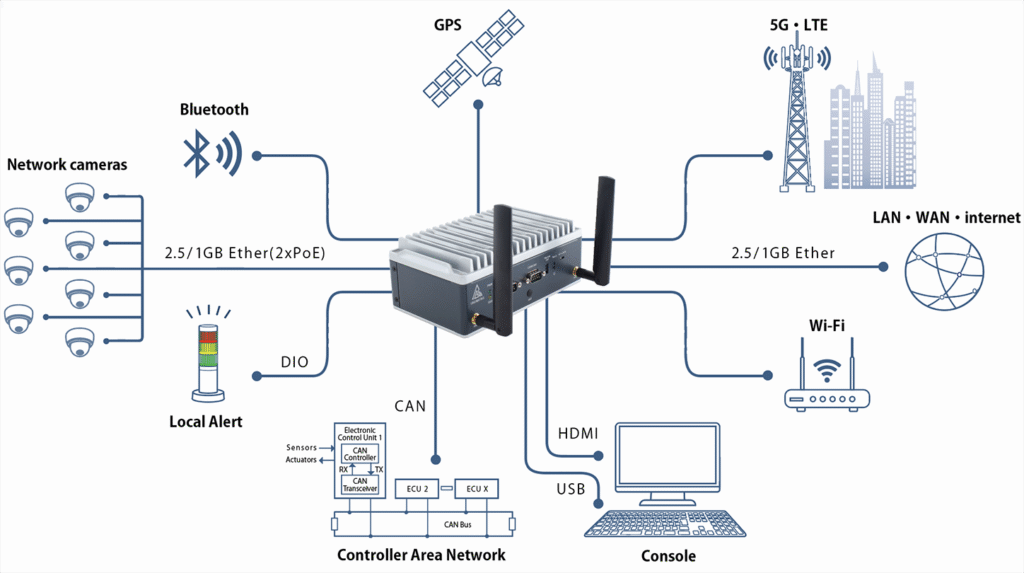

A typical deployment includes local computing hardware that runs AI inference, interfaces with multiple input sources, and delivers results to on-site dashboards or management systems. A dedicated Edge AI Box often serves as the local computing unit in this architecture.

From an operational perspective, data usually flows from cameras or sensors to the edge device, where inference is performed locally. Alerts, events, or metadata are then transmitted to local dashboards or control systems, enabling fast response without exposing raw data externally.
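The data flow described above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the `Frame` and `Event` types, the placeholder `detect` function standing in for a real model, and the `publish` callback standing in for a local dashboard or control system. The point it shows is that only event metadata is passed on, while raw input stays on the edge device.

```python
from dataclasses import dataclass, asdict
from typing import Callable, Iterable, List


@dataclass
class Frame:
    """Raw input from a camera or sensor (hypothetical type)."""
    source_id: str
    timestamp: float
    pixels: bytes  # raw data; stays on the edge device


@dataclass
class Event:
    """Metadata produced by inference (hypothetical type)."""
    source_id: str
    timestamp: float
    label: str
    confidence: float


def detect(frame: Frame) -> List[Event]:
    """Placeholder for a real model: reports 'motion' for any non-empty frame."""
    if not frame.pixels:
        return []
    return [Event(frame.source_id, frame.timestamp, "motion", 0.9)]


def process(frames: Iterable[Frame],
            publish: Callable[[dict], None],
            threshold: float = 0.5) -> int:
    """Run inference locally and publish only event metadata."""
    sent = 0
    for frame in frames:
        for event in detect(frame):
            if event.confidence >= threshold:
                publish(asdict(event))  # no raw pixels in the payload
                sent += 1
    return sent


# Stand-in for a local dashboard: a list that collects published events.
dashboard_inbox: List[dict] = []
frames = [Frame("cam-1", 0.0, b"\x01\x02"), Frame("cam-2", 0.1, b"")]
sent = process(frames, dashboard_inbox.append)
```

In a real deployment, `detect` would wrap the inference runtime on the edge device and `publish` would write to whatever local interface the dashboard or control system exposes.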

These systems are optimized for long-term stability, efficient power consumption, and flexible integration with existing infrastructure. Local deployment allows Edge AI systems to continue operating independently within a local network, even when external connectivity is limited or unavailable.
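One common way to tolerate limited external connectivity is a store-and-forward queue: events are always handled locally, and anything meant for an external system is buffered until the link returns. The sketch below assumes this pattern; the source does not specify it. The `StoreAndForward` class, the `uplink` function, and the `link` flag are all hypothetical names used only for illustration.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForward:
    """Buffer events while the external link is down (illustrative sketch)."""

    def __init__(self, send: Callable[[dict], bool], max_buffered: int = 1000):
        self._send = send  # returns True on successful delivery
        # Bounded buffer: when full, the oldest event is dropped first.
        self._buffer: Deque[dict] = deque(maxlen=max_buffered)

    def submit(self, event: dict) -> None:
        """Queue an event, then try to deliver everything pending."""
        self._buffer.append(event)
        self.flush()

    def flush(self) -> int:
        """Deliver buffered events in order; stop at the first failure."""
        delivered = 0
        while self._buffer:
            if not self._send(self._buffer[0]):
                break
            self._buffer.popleft()
            delivered += 1
        return delivered

    @property
    def pending(self) -> int:
        return len(self._buffer)


# Simulated external link that can be toggled up or down.
link = {"up": False}
received = []


def uplink(event: dict) -> bool:
    if not link["up"]:
        return False
    received.append(event)
    return True


queue = StoreAndForward(uplink)
queue.submit({"id": 1})   # link down: buffered
queue.submit({"id": 2})   # link down: buffered
pending_while_down = queue.pending
link["up"] = True
queue.submit({"id": 3})   # link up: all three delivered in order
```

The bounded buffer is a deliberate trade-off: it caps memory use on constrained hardware at the cost of dropping the oldest events during a long outage.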

Understanding typical deployment architectures helps organizations design Edge AI systems that are scalable, maintainable, and suited to real operational conditions.

This article is part of DAO EDGE’s insights on practical Edge AI deployment.